BabyZen is an application that monitors the key quantities defining a baby's environment. It uses an advanced general-purpose sensor board in the form of a flexible Texas Instruments BoosterPack, which is fully compliant with the official design guidelines. Our manually etched and soldered PCB carries high-precision sensors for temperature, humidity, ambient light, acceleration and barometric pressure. It also features an ADC that supports up to four additional external analog sensors.

The ultra-low-power BoosterPack is stacked onto the CC3200 LaunchPad, which preprocesses the sensor data and sends it to a server via HTTPS. The data is then stored in a database and analyzed by a machine learning framework to detect correlations between environmental conditions and the baby's well-being. An integrated mobile application provides the parents with visualizations and recommendations on how to improve their baby's life.

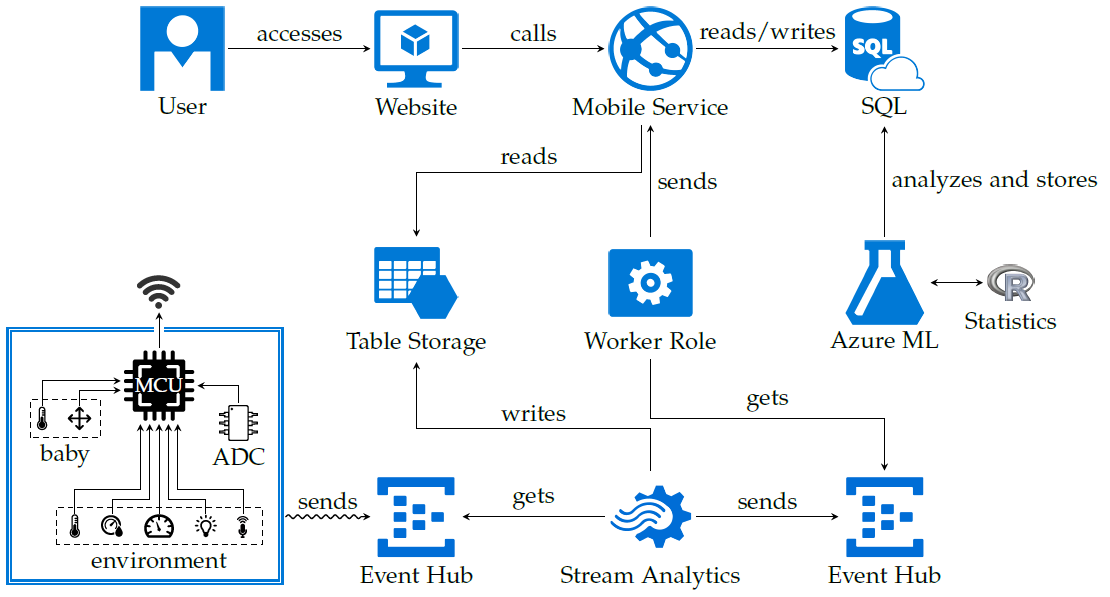

We had already built a solid cloud architecture earlier, but we refined it further for the final prototype of the device. The architecture now looks as follows.

Most notably, we use Azure Stream Analytics to preprocess the data. The preprocessed data is then stored in intermediate storage, where the retention time is chosen according to the volatility of the stored data. For example, data that has been averaged over one minute is kept for a week. Our Stream Analytics job also has a passthrough query, which directs parts of the (validated) data to an "internal" Azure Event Hub, meaning one that is only accessible from other parts of our cloud architecture and not externally.
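To make the preprocessing step more concrete, here is a minimal Python sketch of one-minute averaging combined with a one-week retention rule. It is not our actual Stream Analytics query; the function names, the `(timestamp, sensor, value)` tuple layout and the in-memory dictionary are assumptions chosen purely for illustration.

```python
from collections import defaultdict
from statistics import mean

WINDOW_SECONDS = 60                 # averaging window of one minute
RETENTION_SECONDS = 7 * 24 * 3600   # minute averages are kept for one week


def minute_averages(readings):
    """Average readings per sensor over non-overlapping one-minute windows.

    `readings` is an iterable of (timestamp_seconds, sensor_name, value)
    tuples; this layout is a hypothetical stand-in for the real events.
    """
    buckets = defaultdict(list)
    for timestamp, sensor, value in readings:
        # Align every reading to the start of its one-minute window.
        window_start = int(timestamp // WINDOW_SECONDS) * WINDOW_SECONDS
        buckets[(window_start, sensor)].append(value)
    return {key: mean(values) for key, values in sorted(buckets.items())}


def prune_expired(averages, now):
    """Drop averaged entries whose window started more than a week ago."""
    return {
        key: value
        for key, value in averages.items()
        if now - key[0] <= RETENTION_SECONDS
    }


if __name__ == "__main__":
    sample = [
        (0, "temperature", 20.0),
        (30, "temperature", 22.0),
        (61, "temperature", 24.0),
    ]
    print(minute_averages(sample))
```

In the real pipeline the windowing is done by the Stream Analytics job and the retention is a property of the storage, so the sketch only mirrors the logic, not the deployment.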

We use the internal Event Hub to enable viewing of currently streamed data. This can be integrated into any app via our web API, which still uses Azure Mobile Services. The real-time part has been implemented using SignalR, which also transports the data from the internal Event Hub to the API. We do not expect scaling problems with this solution: we can add more API instances, more Event Hubs and other components. The dependencies are all loosely coupled and can scale on demand.
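The loose coupling described above can be illustrated with a small publish/subscribe relay. The following Python sketch is only an in-process stand-in: it uses neither SignalR nor Event Hubs, and the `RelayHub` class and its methods are hypothetical names introduced for this example.

```python
class RelayHub:
    """Minimal fan-out relay: publishers do not know who is listening."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, callback):
        """Register a callback and return a function that unregisters it."""
        self._subscribers.append(callback)

        def unsubscribe():
            if callback in self._subscribers:
                self._subscribers.remove(callback)

        return unsubscribe

    def publish(self, event):
        """Forward one event to every currently registered subscriber."""
        # Iterate over a copy so subscribers may unsubscribe while notified.
        for callback in list(self._subscribers):
            callback(event)


if __name__ == "__main__":
    hub = RelayHub()
    received = []
    stop = hub.subscribe(received.append)
    hub.publish({"sensor": "temperature", "value": 21.5})
    stop()
    hub.publish({"sensor": "temperature", "value": 22.0})
    print(received)  # only the first event was delivered
```

Because the publisher only talks to the relay, more consumers (in our case, more API instances) can be attached without touching the producing side.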

We also created a short video showing the whole construction process of the device. We start with initial discussions about the principal use of the sensors and continue with writing the device's software. Finally, we etch, solder and wire the PCB. We conclude with a short demo of the finished prototype, in which we showcase the fully functional sound sensor.

In the next couple of weeks I will release some interesting parts of the project that could be useful to others as open-source projects. These parts include, but are not limited to, the simulator (adapted to fit general-purpose needs, but still tailored for use with Azure Event Hubs), the report LaTeX template (much better looking and more versatile than the available Word file) and a scale-out version of our real-time communication scheme, involving "internal" SignalR clients.